In the last couple of posts, we have talked about how application systems need a change application system around them to manage changes to the application system itself: a “system to manage the system,” as it were. We also talked about the multi-part nature of application systems and the fact that they typically run in more than one environment at any given time and “move” from environment to environment as part of their QA process. These first three posts seek to set a working definition of the thing being changed so that we can proceed to a working definition of a system for managing those changes. This post starts that second part of the series: defining the capabilities of a change application system. That definition will then serve as the base for the third part: pragmatically adopting and applying the capabilities to begin achieving a DevOps mode of operation.

DevOps is a large problem domain with many moving parts. Just within the first set of these posts, we have seen how four rather broad area definitions can multiply substantially in a typical environment. Further, different stakeholders will prioritize different aspects of the problem domain based on their discipline’s perspective. The whole point of DevOps, of course, is to eliminate that perspective bias. So, it becomes very important to have some method for unifying the understanding and discussion of the organization’s capabilities. In the final analysis, what that unified picture looks like matters less than that the picture be clearly understood by all.

To that end, I have put together a framework that I use with my customers to help in the process of understanding their current state and prioritizing their improvement efforts. I initially presented this framework at the Innovate 2012 conference and subsequently published an introductory whitepaper on the IBM developerWorks website. My intent with these posts is to expand the discussion and, hopefully, help folks get better faster. The interesting thing to me is to see folks adopt this either as is or as the seed of something of their own. Either way, it has been gratifying to see folks respond to it in its nascent form and I think the only way for it to get better is to get more eyeballs on it.

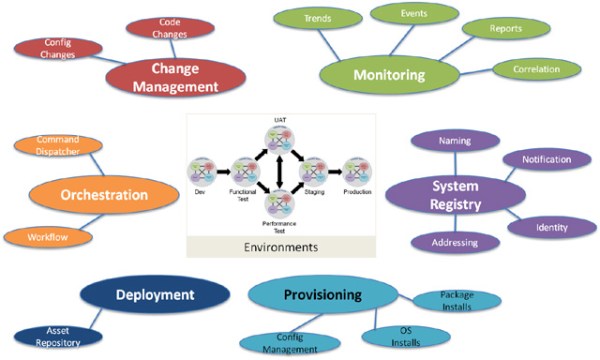

So, here is my picture of the top level of the capability areas (tools and processes) an organization needs to have to deliver changes to an application system.

The quality and maturity of these within the organization will vary based on their business needs – particularly around formality – and the frequency with which they need to apply changes.

I applied three principles when I put this together:

- The capabilities had to be things that exist in all environments in which the application system runs (i.e., dev, test, prod, or whatever layers exist). The idea here is that such a perspective will help unify tooling and approaches toward a theoretical ideal of one solution for all environments.

- The capabilities had to be broad enough to allow for different levels of priority / formality depending on the environment. The idea is not to burden a more volatile test environment with production-grade formality, or vice versa, but to allow a structured discussion of how the team will deliver that capability in a unified way to the various environments. DevOps is an Agile concept, so the notion of minimally necessary applies.

- The capabilities had to be generic enough to apply to any technology stack that an organization might have. Larger organizations may need multiple solutions based on the fact that they have many application systems that were created at different points in time, in different languages, and in different architectures. It may not be possible to use exactly the same tool / process in all of those environments, but it most certainly is possible to maintain a common understanding and vocabulary about it.
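
To make the three principles a little more concrete, here is a minimal sketch of how a team might record them. Everything in it is my own illustration rather than part of the framework itself: the `Formality` scale, the `Capability` class, the environment names, and the placeholder capability names are all hypothetical. The point is only that each capability is described once, is expected in every environment, and carries a per-environment level of formality rather than a single fixed one.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Formality(IntEnum):
    """Hypothetical scale for how formally a capability is applied."""
    MINIMAL = 1
    MODERATE = 2
    STRICT = 3


@dataclass
class Capability:
    """One capability area, described once and applied in every environment."""
    name: str
    # Formality keyed by environment name, e.g. {"dev": Formality.MINIMAL}.
    formality: dict[str, Formality] = field(default_factory=dict)

    def missing_environments(self, environments: list[str]) -> list[str]:
        """Return the environments where this capability is not yet defined."""
        return [env for env in environments if env not in self.formality]


# Placeholder environment layers; substitute whatever layers actually exist.
ENVIRONMENTS = ["dev", "test", "prod"]

# Placeholder capability names (not the ones in the framework picture).
capabilities = [
    Capability(
        "example-capability-a",
        {"dev": Formality.MINIMAL, "test": Formality.MODERATE, "prod": Formality.STRICT},
    ),
    Capability(
        "example-capability-b",
        {"dev": Formality.MINIMAL, "prod": Formality.STRICT},
    ),
]

# Principle 1: every capability should exist in every environment.
# Collect only the capabilities that have at least one gap.
gaps = {
    cap.name: cap.missing_environments(ENVIRONMENTS)
    for cap in capabilities
    if cap.missing_environments(ENVIRONMENTS)
}
print(gaps)
```

Nothing here is tied to a particular tool or technology stack, which is the intent of the third principle: the vocabulary stays the same even when the tooling behind each capability differs.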

In the next couple of posts, I will drill a bit deeper into the capability areas to apply some scope, focus, and meaning.